Today in 1936, Alan Turing delivered to the London Mathematical Society his paper “On Computable Numbers, with an Application to the Entscheidungsproblem.” In the paper, Turing described the Universal Machine, which later became known as the Turing Machine: an idealized computing device capable of performing any mathematical computation that can be represented as an algorithm. Turing argued that there cannot exist any universal method of decision and, hence, that mathematics will always contain undecidable (as opposed to unknown) propositions.

In the subsequent decade, the paper greatly influenced the advent of modern computer programming. Turing: “It is always possible for the computer to break off from his work, to go away and forget all about it, and later to come back and go on with it. If he does this he must leave a note of instructions (written in some standard form) explaining how the work is to be continued … The note of instructions must enable him to carry out one step and write the next note. Thus the state of progress of the computation at any stage is completely determined by the note of instructions and the symbols on the tape.”

The Turing Machine, as you may know, consists of a head scanning and modifying symbols on an infinite tape in accordance with a set of rules. What is less widely realized (until you read the entire paper) is that this tape is simply a one-dimensional simplification of the square-ruled paper that a child would use to do sums at school, and that the internal states of the machine are analogous to the states of mind of the human doing the sums. I could hardly believe it: Turing, only 24 years old, was inventing the computer by deconstructing the mind!
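To make the head, tape and rules concrete, here is a minimal sketch in Python. It is not Turing's own notation; it is just one common modern way to write such a machine down. The names `run`, `INCREMENT` and `BLANK` are my own inventions for illustration, the "infinite" tape is approximated by a dictionary that hands out blank cells on demand, and the step limit is a safety net I added. The example rule table adds one to a binary number.

```python
from collections import defaultdict

BLANK = "_"                      # symbol found on every unwritten cell
MOVES = {"L": -1, "R": 1, "N": 0}

def run(rules, tape_input, state="start", halt="halt", max_steps=10_000):
    """Run a one-tape machine; return the non-blank stretch of the final tape.

    rules maps (state, scanned symbol) -> (symbol to write, move, next state).
    """
    # A dict that yields BLANK for any unseen cell stands in for the infinite tape.
    tape = defaultdict(lambda: BLANK, enumerate(tape_input))
    head = 0
    steps = 0
    while state != halt:
        if steps >= max_steps:
            raise RuntimeError("step limit reached without halting")
        # The whole "state of progress" is just (state, head, tape).
        write, move, state = rules[(state, tape[head])]
        tape[head] = write
        head += MOVES[move]
        steps += 1
    cells = [i for i, symbol in tape.items() if symbol != BLANK]
    if not cells:
        return ""
    return "".join(tape[i] for i in range(min(cells), max(cells) + 1))

# Hypothetical rule table: binary increment.
# "start" walks right to the end of the number; "carry" walks back left,
# turning 1s into 0s until it can write a 1.
INCREMENT = {
    ("start", "0"): ("0", "R", "start"),
    ("start", "1"): ("1", "R", "start"),
    ("start", BLANK): (BLANK, "L", "carry"),
    ("carry", "1"): ("0", "L", "carry"),
    ("carry", "0"): ("1", "N", "halt"),
    ("carry", BLANK): ("1", "N", "halt"),
}

print(run(INCREMENT, "1011"))  # 1011 is eleven; the result 1100 is twelve
```

Note how little the loop needs to remember between steps: the current state, the head position and the tape. That is the "note of instructions and the symbols on the tape" from the quote above, in roughly twenty lines.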

Compare this to Charles Babbage, the inventor of the cogwheel-based Analytical Engine a century earlier. Babbage was attempting to build a far more complex computing engine than Turing’s abstract model, but was doing it by designing explicit mechanisms to carry out each of the required mathematical and logical operations. He did ingenious work, yet his approach had nothing to do with the human brain. Score one for Alan Turing.

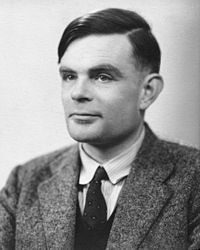

See also Alan Turing 1912-1954

For another contrast with Turing, consider Konrad Zuse… see http://www.nathanzeldes.com/blog/2013/10/konrad-zuse-alan-turing-worlds-first-computer-startup/
