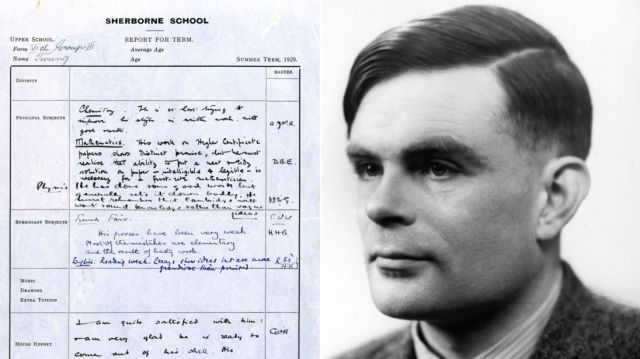

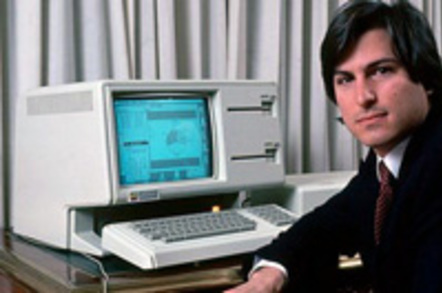

Ida M. Fuller with the first social security check.

Two of this week’s milestones in the history of technology highlight the evolution in the use of computing machines from supporting social security to boosting social cohesion and social anxiety.

On January 31, 1940, Ida M. Fuller became the first person to receive an old-age monthly benefit check under the new Social Security law. Her first check was for $22.54. The Social Security Act was signed into law by Franklin Roosevelt on August 14, 1935. Kevin Maney in The Maverick and His Machine: “No single flourish of a pen had ever created such a gigantic information processing problem.”

But IBM was ready. Its President, Thomas Watson, Sr., defied the odds and during the early years of the Depression continued to invest in research and development, building inventory, and hiring people. As a result, according to Maney, “IBM won the contract to do all of the New Deal’s accounting – the biggest project to date to automate the government. … Watson borrowed a common recipe for stunning success: one part madness, one part luck, and one part hard work to be ready when luck kicked in.”

The nature of processing information before computers is evident in the description of the building in which the Social Security administration was housed at the time:

The most prominent aspect of Social Security’s operations in the Candler Building was the massive amount of paper records processed and stored there. These records were kept in row upon row of filing cabinets – often stacked double-decker style to minimize space requirements. One of the most interesting of these filing systems was the Visible Index, which was literally an index to all of the detailed records kept in the facility. The Visible Index was composed of millions of thin bamboo strips wrapped in paper upon which specialized equipment would type every individual’s name and Social Security number. These strips were inserted onto metal pages which were assembled into large sheets. By 1959, when Social Security began converting the information to microfilm, there were 163 million individual strips in the Visible Index.

On January 1, 2011, the first members of the baby boom generation reached retirement age. The number of retired workers is projected to grow rapidly and will double in less than 30 years. People are also living longer, and the birth rate is low. As a result, the ratio of workers paying Social Security taxes to people collecting benefits will fall from 3.0 to 1 in 2009 to 2.1 to 1 in 2031.

In 1955, the 81-year-old Ida Fuller (who died on January 27, 1975, aged 100, after collecting $22,888.92 in Social Security monthly benefits, compared to her contributions of $24.75) said: “I think that Social Security is a wonderful thing for the people. With my income from some bank stock and the rental from the apartment, Social Security gives me all I need.”

Sixty-four years after the first Social Security check was issued, paper checks were being replaced by online transactions, and letters, as the primary form of person-to-person communication, were being replaced by web-based social networks.

On February 4, 2004, Facebook was launched when Thefacebook.com went live at Harvard University. Its home screen read, says David Kirkpatrick in The Facebook Effect, “Thefacebook is an online directory that connects people through social networks at colleges.” Zuckerberg’s classmate Andrew McCollum designed a logo using an image of Al Pacino he’d found online that he covered with a fog of ones and zeros.

Four days after the launch, more than 650 students had registered and by the end of May, it was operating in 34 schools and had almost 100,000 users. “The nature of the site,” Zuckerberg told the Harvard Crimson on February 9, “is such that each user’s experience improves if they can get their friends to join in.” In late 2017, Facebook had 2.07 billion monthly active users.

Successfully connecting more than a third of the world’s adult (15+) population brings a lot of scrutiny. “Is Social Media the Tobacco Industry of the 21st Century?” asked an article on Forbes.com recently, summing up the current sentiment about Facebook.

The most discussed complaint is that Facebook is bad for democracy, aiding and abetting the rise of “fake news” and “echo chambers.”

Why blame the network for what its users do with it? And how exactly does what American citizens do with it affect their freedom to vote in American elections?

Consider the social network of the 18th century. On November 2, 1772, the town of Boston established a Committee of Correspondence as an agency to organize a public information network in Massachusetts. The Committee drafted a pamphlet and a cover letter which it circulated to 260 Massachusetts towns and districts, instructing them in current politics and inviting each to express its views publicly. In each town, community leaders read the pamphlet aloud and the townspeople discussed, debated, and chose a committee to draft a response which was read aloud and voted upon.

When 140 towns responded and their responses were published in the newspapers, “it was evident that the commitment to informed citizenry was widespread and concrete,” according to Richard D. Brown (in Chandler and Cortada, eds., A Nation Transformed by Information). But why this commitment?

In Liah Greenfeld’s words (in Nationalism: Five Roads to Modernity), “Americans had a national identity… which in theory made every individual the sole legitimate representative of his own interests and equal participant in the political life of the collectivity. It was grounded in the values of reason, equality, and individual liberty.”

The Internet is not “inherently” democratizing, any more than the telegraph ever was, no matter how much people have always wanted to believe in the power of technology to transform society. What makes societies democratic is believing in and upholding the right values for a long period of time: the individual liberty and responsibility of an informed citizenry making its own decisions while debating, discussing, and sharing information (and mis-information).

More than a century after the establishment of the first social network in the U.S., the citizenry was informed (and mis-informed) by hundreds of newspapers, mostly sold by newspaper boys on the streets. After paying a visit to the United States, Charles Dickens described (in Martin Chuzzlewit, 1844) the newsboys greeting a ship in New York Harbor: “‘Here’s this morning’s New York Stabber! Here’s the New York Family Spy! Here’s the New York Private Listener! … Here’s the full particulars of the patriotic loco-foco movement yesterday, in which the whigs were so chawed up, and the last Alabama gouging case … and all the Political, Commercial and Fashionable News. Here they are!’ … ‘It is in such enlightened means,’ said a voice almost in Martin’s ear, ‘that the bubbling passions of my country find a vent.’”

Another visitor from abroad, the Polish writer Henryk Sienkiewicz, could discern (in Portrait of America, 1876) in the mass circulation of newspapers, the American belief about the universal need for information: “In Poland, a newspaper subscription tends to satisfy purely intellectual needs and is regarded as somewhat of a luxury which the majority of the people can heroically forego; in the United States a newspaper is regarded as a basic need of every person, indispensable as bread itself.”

The need for information of all kinds is basic, as Mark Twain observed (in Pudd’nhead Wilson’s New Calendar, Following the Equator, 1897): “The old saw says, ‘Let a sleeping dog lie.’ Right. Still, when there is much at stake, it is better to get a newspaper to do it.”

Blaming Facebook for fake news is like blaming the newspaper boys for the fake news the highly partisan 19th century newspapers were in the habit of publishing. Somehow, American democracy survived.

Consider a more recent fact: According to a Gallup/Knight Foundation survey, the American public divides evenly on the question of who is primarily responsible for ensuring people have an accurate and politically balanced understanding of the news—48% say the news media (“by virtue of how they report the news and what stories they cover”) and 48% say individuals themselves (“by virtue of what news sources they use and how carefully they evaluate the news”).

Half of the American public believes someone else is responsible for feeding them the correct “understanding of the news.” Facebook had little to do with the erosion of the belief in individual responsibility, but it is certainly feeling the impact of the drift away from the values upheld by the users of the 18th-century social network.

A number of this week’s milestones in the history of technology showcase two prominent computer industry showmen, Steve Jobs and Thomas Watson Sr., their respective companies, Apple and IBM, and how they sold smart machines to the general public.

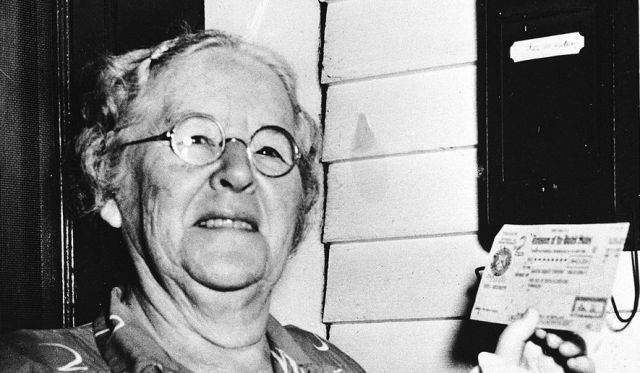

Thirty-five years ago this week, Apple introduced a computer that changed the way people communicated with their electronic devices, using graphical icons and visual indicators rather than punched cards or text-based commands.

A number of this week’s milestones in the history of technology highlight the role of mechanical devices in automating work, augmenting human activities, and allowing many people to participate in previously highly-specialized endeavors—a process technology vendors like to call “democratization” (as in “democratization of artificial intelligence”).

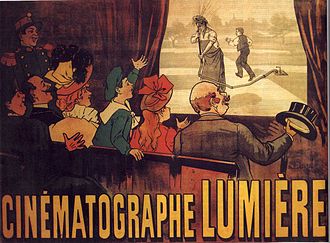

Two of this week’s milestones in the history of technology reflect the analog-to-digital transformation of film making and distribution.